tags : Algorithms, Courses

Source: Skiena’s Algorithms

Lecture 1

- We seek algorithms which are correct and efficient.

- No efficient, correct algorithm exists for the traveling salesman problem.

- Proving that an algorithm in NP complete will help you sleep well at night because you’ll know that there exists no fast algorithm for that problem vs thinking you’re being stupid while coming up with the algorithm to solve the problem.

Algorithm designer skills

- Proving Incorrectness

- We have a heuristic and then we try to disprove the correctness

- Proving incorrectness(eg. come up with counter examples, instance that breaks the algorithm)

- Heuristic: A algorithm that isn’t correct or may not be correct

- The easier and smaller the counter example is the better

- Proving correctness

- Failing to find a counter example != the algorithm is correct

- Induction is a useful way to prove correctness of recursive algorithms

- Proof by contradiction

Lecture 2

RAM Model of computation

- Enables us to design algorithms in machine independent manner

- It’s not an accurate model but it’s simple to work with and has a lot of truth to it

- We measure the run time of an algorithm by counting the number of steps.

- Assumptions

- Each “simple” operation (+, -, =, if, call) takes 1 step.

- Loops and subroutine calls are not simple operations.

- Each memory access takes exactly 1 step.

Issues with RAM model

In the RAM model of complexity

- we sometimes may have bumps in functions. i.e in different input sizes results may vary

- Complexity is typically expressed as a function of the size of the input but sometimes it’s too much detail to specify precisely

- eg.

T (n) = 12754n2 + 4353n + 834 lg2 n + 13546

- eg.

- So we instead go with Asymptotic notation using Big Oh Notation.

- See Complexity Theory

Lecture 3

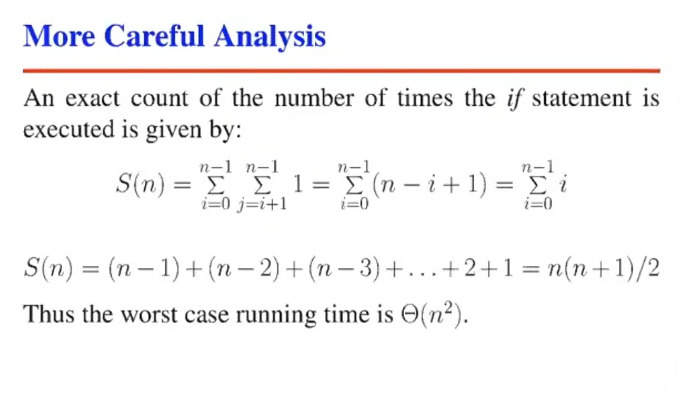

Analysis

When we say nothing infront of “running time”, we usually mean worst case.

Worst case analysis

- For

selection sort- : It’s No worse than

- : It’s No better than

- : It is

- There’s no discussion about best case etc here. i.e It’s analyzing the worst case by trying to figure out the upper and lower bounds.

DOUBT: I am still confused about how we come up with , esp for insertion sort.

Logarithm

In the context of algorithms, Logarithms reflect:

- How many times we can

doublesomething util we get ton - How many times we can

halfsomething util we get to1 - If the input halves at each step, its likely O(LogN) or O(NIogN). Eg. Binary search is , Binary tree height is